Instead of just saying "LLMs work way better now", I thought that it would be interesting for once to show something more "applied".

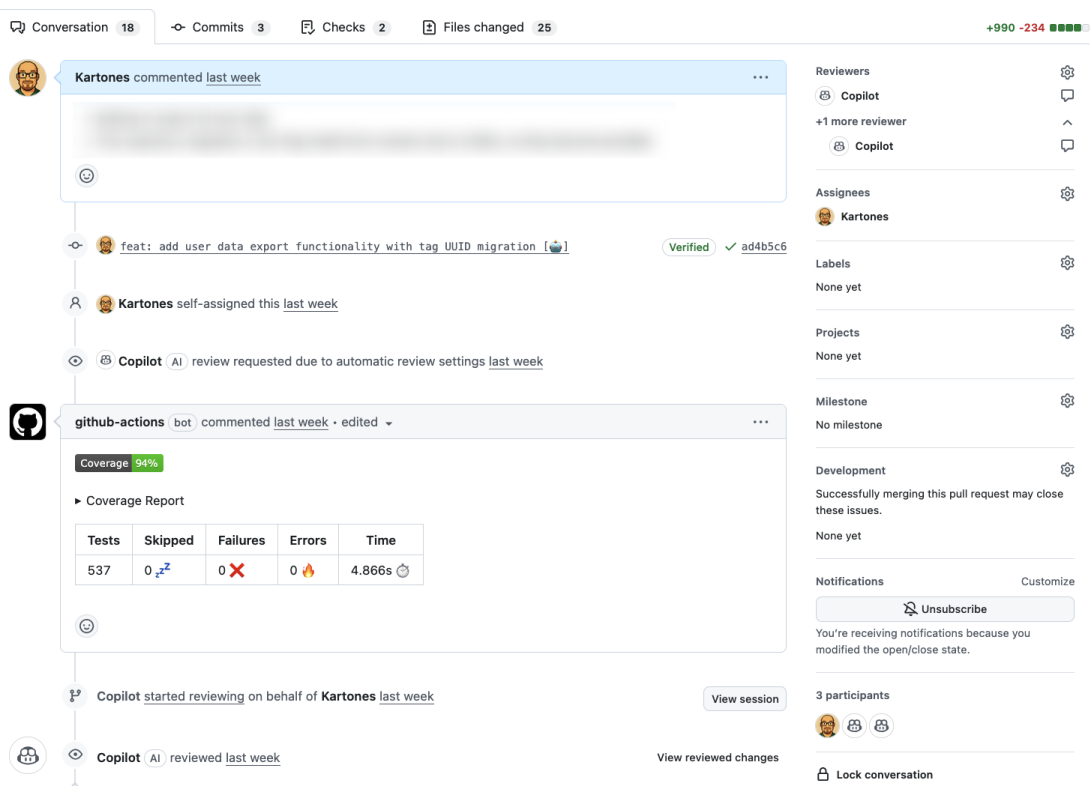

First, a screenshot:

This is a GitHub pull request from one of my pet projects (private, at least for now). I haven't manually typed a single line of code; it is 100% prompted, on purpose. It sits at around 11k LOC at the time of this writing, ~62% of which are tests.

I'm using Claude Code and GH Copilot through VS Code (Sonnet 4.x models). I then get AI reviews by combining GH Copilot Reviews and, at times, a local custom subagent, and resolve them either with "GH Copilot agent requests" or locally via Claude Code, pushing the changes afterwards.

I still review the code, but I am starting to review the tests' logic less. I look at them quickly, and mainly focus on the test cases that my testing subagent came up with and the Python one implemented. Agents really do mimic existing tests and code patterns, so given a few tests, they can carry on by themselves at the same quality. From time to time, I do check the tests, and often find fixtures that can be extracted, or small improvements. Agents are not yet good code janitors, at least without very specific prompting (I have some general guidelines as instructions, but to little effect).

The PR review feedback that I get from Copilot is still not amazing, but it is already at a level that points towards general best practices and potential security issues (mostly related to input validation, at least in this project). I miss deeper, more exhaustive feedback at times, though. I have a code reviewer subagent for those in-depth analysis cases, as simply running locally with full source code access gives significantly better insights.

What I do to be comfortable not checking the tests in depth is simple: 500+ tests; mostly unit, fewer integration ones, all very fast (< 5 seconds total). And 94% coverage. Both figures apply to the backend code, as the frontend in this project is right now a throwaway barebones UI. MVC controllers ("web routers") are thin, so apart from sanitizing input, they just call business logic; there's little real value in testing them right now.
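To make the "thin web router" idea concrete, here is a minimal, framework-agnostic sketch. It is not the project's code: `NoteService`, `create_note_route` and the 280-character limit are hypothetical names and values picked for illustration. The router only sanitizes input and delegates, so the logic worth testing lives in the service.

```python
from dataclasses import dataclass, field


@dataclass
class NoteService:
    """Hypothetical business-logic layer; this is where the real tests go."""

    notes: list[str] = field(default_factory=list)

    def add_note(self, text: str) -> int:
        self.notes.append(text)
        return len(self.notes)  # 1-based id of the new note


def create_note_route(payload: dict, service: NoteService) -> tuple[int, dict]:
    """Thin 'web router': sanitize input, delegate, shape the response."""
    text = str(payload.get("text", "")).strip()
    if not text or len(text) > 280:  # 280 is an arbitrary illustrative limit
        return 400, {"error": "text must be 1-280 characters"}
    note_id = service.add_note(text)
    return 201, {"id": note_id}
```

With a shape like this, a handful of service-level unit tests cover most of the behavior, and the router has very little left that could break.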

The backend (Python) is heavily modular, with a lot of Dependency Injection, to the point that there are only two mock.patch occurrences in the whole codebase. It also uses a few classic patterns, and all files are small and clearly named, so a fresh agent session finds everything very quickly.
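As a rough sketch of why Dependency Injection keeps mock.patch out of the tests: when a class receives its collaborators through the constructor, a test can hand it a small in-memory fake instead of patching a module path. All names below (`EmailSender`, `SignupService`, `FakeSender`) are hypothetical and only illustrate the pattern; they are not taken from the project.

```python
from dataclasses import dataclass, field
from typing import Protocol


class EmailSender(Protocol):
    """Hypothetical port: anything that can send an email."""

    def send(self, to: str, subject: str) -> None: ...


@dataclass
class SignupService:
    """Business logic receives its collaborator instead of importing it."""

    sender: EmailSender

    def register(self, email: str) -> str:
        normalized = email.strip().lower()
        if "@" not in normalized:
            raise ValueError(f"invalid email: {email!r}")
        self.sender.send(to=normalized, subject="Welcome")
        return normalized


@dataclass
class FakeSender:
    """In-memory test double that records calls; no mock.patch involved."""

    sent: list[tuple[str, str]] = field(default_factory=list)

    def send(self, to: str, subject: str) -> None:
        self.sent.append((to, subject))


def test_register_sends_welcome_email() -> None:
    fake = FakeSender()
    service = SignupService(sender=fake)

    assert service.register("  Ada@Example.com ") == "ada@example.com"
    assert fake.sent == [("ada@example.com", "Welcome")]
```

The fake is plain code that an agent can read and copy, which fits the observation that agents follow the patterns they find.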

Another thing that I have done is iterate a lot, both on the initial architecture and logic, and on my instructions, skills and subagents. I still iterate on them, but now that they tend to trip both the linters and my rejections less often, I have higher confidence 😄. Partly this is due to now having reference code to use as guidance (vs more detailed prompts at the beginning), but when I add something completely new, the output also looks better.

I always give the agents either small tasks, or big ones with multiple detailed steps, executing and validating them one by one. I don't buy the "you can one-shot everything" claim, not even when I use Opus models. If I don't define what I want, with some guidelines on how I want it, I get subpar results, except for some obviously simple tasks.

For now, it still looks clear to me that if you let agents fully loose, you'll get working but standard-to-suboptimal code. They also introduce bugs now and then, but with tests in place they course-correct and eventually reach a working solution on their own most of the time. I'd say that doing TDD (Test-Driven Development) is more valuable than ever.

Recommendations

For once, a few personal recommendations (not dogmas, take them or ignore them):

- Tests are a basic pillar for agents

- TDD means tests before code, so it is a good way of achieving working non-test code earlier

- Tests without coverage might be false security

- Agents follow existing code and test patterns, so build a good enough foundation, and you'll get way less "AI slop" (almost none?)

- You can't yet trust the code agents generate as fully functional. Always review the main code changes

- You can now trivially get working code, but, as Andrzej Tuchołka wrote in his book, full reliance on LLMs means converging to a common solution that might not be the best quality one

- A basic code review is better than no code review

- Small iterations, incremental changes, and MVPs are what work for one-shotting

- I maintain my point of view of coding agents being like a senior engineer with goldfish memory that only wishes to close tickets as fast as possible, even if that means bullshitting you. [1]
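To illustrate the coverage recommendation from the list above, here is a small made-up example (the function and its prices are hypothetical). The test suite is green, yet the only test never executes the branch that contains the bug. A line-coverage report would show that line as never run, which is the hint a passing suite alone does not give you.

```python
def shipping_cost(weight_kg: float) -> float:
    """Hypothetical pricing rule: flat fee up to 5 kg, surcharge above."""
    if weight_kg <= 5:
        return 4.0
    # Bug: the surcharge is subtracted instead of added.
    return 4.0 - 0.5 * (weight_kg - 5)


def test_light_parcel() -> None:
    # Passes, but only exercises the first branch.
    assert shipping_cost(2) == 4.0
```

Adding a test for a parcel heavier than 5 kg, written before the fix in TDD style, would fail immediately and expose the bug.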

Let's see how things advance during the year (2026).

Notes

[1] I originally wrote "... even if that means lying to you". I did the experiment of asking Claude for feedback on the sentence, and its answer was: "'lying to you' implies intent. If that's deliberate, keep it. If you want accuracy, 'even if that means bullshitting you' is closer - it captures the confident wrongness without implying the agent knows the truth and hides it. Frankfurt's definition of bullshit is literally 'indifference to truth,' which fits agents well." 😄