Walking home from work, I recently listened to an episode of the Rework podcast titled "You need less than you think", which reminded me of a few technical examples that also fit the topic.

Agility

When I started studying computer science, we were only taught the classic and dreaded waterfall model. Much has changed since then, and I was lucky enough to learn TDD and XP in 2004 and to apply them to my work at times. Still, we're midway through 2018 and, in my opinion, too many startups and tech companies in general struggle to generate value fast enough to be considered truly agile. I've seen both small and mid-sized tech teams struggle to deliver products on time, but I've also experienced the opposite: very small teams (like Minijuegos.com, with 3 engineers counting the CTO) and workforces of 100+ engineers (like Tuenti when it was a social network) where we were able to deliver what I consider high-quality, complex products in very short time periods.

Those two examples are outliers, though. Usually you either end up in big, long projects or deliver tiny projects without an ambitious scope. At a previous job we had a real need to be agile: we were an early-stage startup with some initial funding but a pressing need to build the product and start generating money [1], a small team (we peaked at ~10 engineers, IIRC), and some "critical" requirements like being "highly scalable" from day 1.

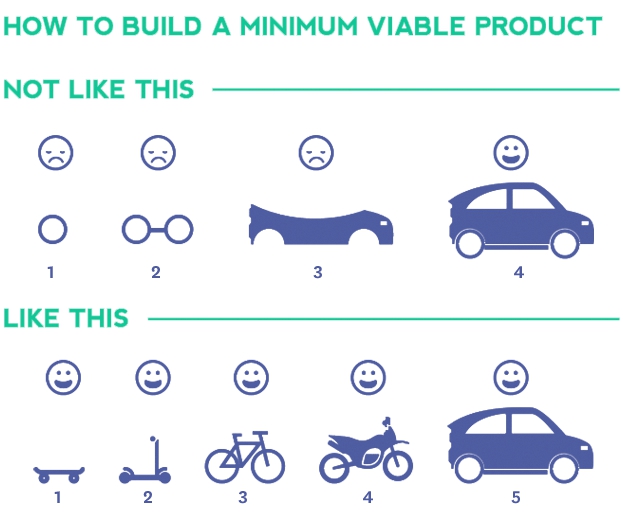

I had the luck of working with eferro, who tries to be agile, pragmatic and iterative when building products, and he seeded us with the concept of building MVPs (Minimum Viable Products). I'm not going to enter into details of how it works but instead use a classic picture that perfectly represents it:

We tried to be extremely pragmatic whenever possible, and even iterated on some existing services to simplify them as we moved towards a fully automated platform (from infrastructure to every service, everything was triggered through code, with no intermediate manual steps). I'm going to talk about three scenarios where we built MVPs that some people would consider almost "hacks", but that worked really well in our case.

#1: You don't always need users

When we got our first paying clients, we not only billed them manually but also didn't track platform usage per user, because we didn't actually have users.

We reached the point where the platform could work as an API-based self-service, so product came to us with the need of "adding users so we can bill them". But we still had lots of critical pieces to build (like an actual website!), so we discussed what was really needed and arrived at the actual requirement: "we need a way to track platform usage per customer to charge them appropriately". Note the tiny but critical detail: if we could somehow track that usage without actual users, as long as we provided accurate metrics, it would be fine.

We had the following data pieces:

- we knew each customer's email, as we sent them a notification when their job was done

- each customer had an API key (stored in a simple Python config dictionary with an "api key -> email" mapping)

- we were gathering metrics, but per job instead of per user

- our AWS SQS message-based architecture allowed us to easily add new fields to the messages without breaking existing services (e.g. a correlation ID that travels with a job and marks its full journey)
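That last point is what made the approach cheap. Below is a minimal sketch of the "tolerant reader" idea, assuming JSON message bodies; the field names job_id and correlation_id are hypothetical. Producers can add fields, and consumers that only read the keys they know keep working. Actually sending the body to SQS (for example with boto3's send_message) is left out.

```python
import json
import uuid
from typing import Optional


def build_job_message(job_id: str, correlation_id: Optional[str] = None) -> str:
    """Producer side: serialize a job message body, adding a correlation id field."""
    return json.dumps({
        "job_id": job_id,
        # New field: generated once, then copied into every downstream message.
        "correlation_id": correlation_id or str(uuid.uuid4()),
    })


def legacy_consumer(body: str) -> str:
    """An older consumer that only knows about job_id; unknown fields are ignored."""
    message = json.loads(body)
    return message["job_id"]


def forward(body: str, next_job_id: str) -> str:
    """Pass the same correlation id along to the next service's message."""
    return build_job_message(next_job_id, json.loads(body).get("correlation_id"))
```

Because consumers only index the keys they need, adding correlation_id (or later a user id) requires no coordinated deploy of every service.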

What we decided to do was to compute, at the API, a SHA hash of the API key and use it as our "user id", add it to every job's messages, and implement a quick and simple CSV export job that would be triggered manually and return a list of all job metrics for a given customer email and start/end datetime range.
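A sketch of the hash-as-user-id plus CSV export idea. The post only says "a SHA hash", so SHA-256 via hashlib is an assumption, as are the API_KEYS contents and the metric fields (job_id, user_id, finished_at, duration_s):

```python
import csv
import hashlib
import io
from datetime import datetime

# Hypothetical config, mirroring the "api key -> email" dictionary described above.
API_KEYS = {
    "key-abc": "alice@example.com",
    "key-xyz": "bob@example.com",
}


def user_id_for(api_key: str) -> str:
    """Derive a stable pseudo user id from an API key (SHA-256 is an assumption)."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


def export_usage_csv(metrics: list, email: str, start: datetime, end: datetime) -> str:
    """Return a CSV of one customer's job metrics with start <= finished_at < end."""
    # Resolve the email to the user id(s) derived from its API key(s).
    user_ids = {user_id_for(key) for key, owner in API_KEYS.items() if owner == email}
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["job_id", "finished_at", "duration_s"])
    for m in metrics:
        if m["user_id"] in user_ids and start <= m["finished_at"] < end:
            writer.writerow([m["job_id"], m["finished_at"].isoformat(), m["duration_s"]])
    return out.getvalue()


# Per-job metrics as they might look once the API stamps each job with a user_id.
metrics = [
    {"job_id": "j1", "user_id": user_id_for("key-abc"),
     "finished_at": datetime(2018, 5, 3), "duration_s": 12},
    {"job_id": "j2", "user_id": user_id_for("key-xyz"),
     "finished_at": datetime(2018, 5, 4), "duration_s": 7},
]
report = export_usage_csv(metrics, "alice@example.com", datetime(2018, 5, 1), datetime(2018, 6, 1))
```

One side benefit of hashing rather than copying the key is that raw API keys don't travel through every message, while the hash stays stable, so all jobs from the same key still group together.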

This approach allowed us to keep building other pieces for a few months, until we really had to add a user service to the platform.

#2: You don't always need a database

The platform we were building allowed customers to customize some parameters based on templates. Those templates were displayed on a small website, a sort of customer-facing catalog, and could also be used to run single-task jobs as demos.

- Some data was stored in PostgreSQL, while the rest was read from AWS S3. You always had to query the DB just to display a few items, but you also always had to fetch the metadata files plus the actual template data.

- Having to use a Python ORM (SQLAlchemy in this case) for such trivial queries (we didn't even have search) was overkill

- We had sample videos showcasing some templates, which were created by the template builders but had to be resized manually (and weren't optimized)

None of these would have been reason enough on its own to rewrite the internals of the service, but combined they made this apparently trivial system a huge source of pain for our "internal users" (the template makers): changes they made were not reflected on the site, and they had to go through lots of trial and error and manual corrections.

We also had fewer than a hundred templates, without many variants, so why bother with a DB for a few hundred "rows" of data in total?

What we did was:

- Revisit all the S3 policies to ensure the metadata and data files stayed consistent: when calling the service from jobs, you either got the newest version "of everything" or the previous version.

- Create a script that, when run (manually), would re-encode the chosen Full HD sample video at a configured web resolution and optimize it a bit (mostly by removing the audio track and dropping some frames)

- Remove the database, replacing it with one JSON file "per table"
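The original re-encoding script isn't shown; this is a hypothetical equivalent that shells out to ffmpeg (assumed to be installed with libx264). The 1280 px width, 24 fps and CRF 28 values are placeholders for "a configured web resolution" and "optimize it a bit":

```python
import shlex
import subprocess
from typing import List

# Hypothetical defaults standing in for the configured web resolution and frame rate.
WEB_WIDTH = 1280
WEB_FPS = 24


def build_ffmpeg_cmd(src: str, dst: str, width: int = WEB_WIDTH, fps: int = WEB_FPS) -> List[str]:
    """Build an ffmpeg command that downscales, drops audio and lowers the frame rate."""
    return [
        "ffmpeg", "-y",               # overwrite dst if it already exists
        "-i", src,
        "-vf", f"scale={width}:-2",   # keep aspect ratio with an even height
        "-r", str(fps),               # output frame rate (frames are dropped as needed)
        "-an",                        # remove the audio track
        "-c:v", "libx264", "-crf", "28", "-preset", "slow",
        "-movflags", "+faststart",    # metadata up front so browsers can start playing early
        dst,
    ]


def reencode(src: str, dst: str) -> None:
    """Run the re-encode manually, e.g. reencode("sample-1080p.mp4", "sample-web.mp4")."""
    cmd = build_ffmpeg_cmd(src, dst)
    print("Running:", shlex.join(cmd))
    subprocess.run(cmd, check=True)  # raises CalledProcessError if ffmpeg fails
```

Keeping command construction separate from execution makes the parameters easy to unit test without having ffmpeg available.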

S3 scales insanely well, so we got rid of the ORM and of having to set up and operate any database engine. The code also got really simple, to the point that data-reading methods became mere "dump this JSON file's contents to the output" or "load this JSON and dump item X".
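A minimal sketch of a read-only "one JSON file per table" store. The loader callable is an assumption that keeps the example storage-agnostic: in production it could wrap an S3 read such as boto3's s3.get_object(Bucket=..., Key=key)["Body"].read(), while here an in-memory dict stands in. The catalog/ prefix and the id field are hypothetical:

```python
import json
from typing import Callable, Optional


class JsonTableStore:
    """Read-only 'tables' backed by one JSON file each (an illustration, not the original service)."""

    def __init__(self, loader: Callable[[str], bytes], prefix: str = "catalog/"):
        # loader fetches the raw bytes stored under a key, e.g. a thin wrapper around an S3 GET.
        self._loader = loader
        self._prefix = prefix

    def all(self, table: str) -> list:
        """'Dump this JSON file's contents to the output.'"""
        return json.loads(self._loader(f"{self._prefix}{table}.json"))

    def get(self, table: str, item_id: str) -> Optional[dict]:
        """'Load this JSON and dump item X'; a linear scan is fine for a few hundred rows."""
        return next((row for row in self.all(table) if row.get("id") == item_id), None)


# In-memory stand-in for the bucket contents.
files = {
    "catalog/templates.json": json.dumps(
        [{"id": "t1", "name": "Intro"}, {"id": "t2", "name": "Outro"}]
    ).encode("utf-8"),
}
store = JsonTableStore(files.__getitem__)
```

Swapping the loader is also what makes local development and testing trivial: no database engine to start, just a dictionary or a directory of files.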

Local development also got faster, as the service was now easy to understand, test and extend.

Later, the demo website was removed and a template service was developed to serve both the main API and the would-be self-service web page. I proposed making it DB-less as well, but the service owner decided to build it on a relational database, just in case.

I like relational databases and think they are very useful in many, many scenarios, but I've also realized that if you can rely on something that "scales well", there may be a simpler solution that removes the need for a DB, at least for the first MVPs.

#3: You don't always need a relational database (nor a NoSQL one)

This was an evolution of the second example. We had a distributed job coordinator, a single process that had to read thousands of messages and manage their state whenever the platform had work to do. The state was stored in PostgreSQL, and while the DB wasn't the main problem, it was quite overkill as storage for a single process's state.

We were also on call, so after a "fun" night with the service crashing multiple times, we decided to rewrite it and came up with a much simpler solution: keep a simple structure of general job data plus the individual job items/tasks, wait until every item has either finished or failed X times (we had retries for some tasks), and just persist everything to AWS S3 as plain JSON files (taking care of S3's default eventually consistent I/O).

Something like this:

```
job {
  id: "...",
  ...
  tasks: {
    "task-id-1": null,
    "task-id-2": "ok",
    "task-id-3": "ko",
    ...
    "task-id-N": null
  }
}
```

This lets you know both the status of the overall job (if the count of null values is > 0, it's still ongoing) and whether a finished job had errors (if the count of "ko" values is > 0), without keeping an explicit overall state. I've come to dread state machines when you can use the actual data to calculate that same state on the fly.
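The derived-state idea can be sketched in Python, assuming the structure above loaded from JSON (so null becomes None) and a hypothetical MAX_ATTEMPTS standing in for the "X" retries. The attempts dictionary is an extra, hypothetical bookkeeping field; the overall status is never stored, only computed:

```python
from collections import Counter

MAX_ATTEMPTS = 3  # hypothetical value for "failed X times"


def record_result(job: dict, task_id: str, succeeded: bool) -> None:
    """Mark a task "ok", or "ko" once it has failed MAX_ATTEMPTS times."""
    attempts = job.setdefault("attempts", {})  # hypothetical retry bookkeeping
    attempts[task_id] = attempts.get(task_id, 0) + 1
    if succeeded:
        job["tasks"][task_id] = "ok"
    elif attempts[task_id] >= MAX_ATTEMPTS:
        job["tasks"][task_id] = "ko"
    # Otherwise the task stays None (null) so it can be retried.


def job_status(job: dict) -> str:
    """Derive the job state from its task results instead of storing it."""
    counts = Counter(job["tasks"].values())
    if counts[None] > 0:
        return "ongoing"
    return "finished_with_errors" if counts["ko"] > 0 else "finished_ok"
```

Since the status is a pure function of the task map, there is no separate state field that can drift out of sync after a crash.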

We could have used Redis to keep the states, but JSON files worked fine, allowed us to TDD our way in from the beginning, and made bugfixing much easier, as we could just grab any failing job's "metadata" and replicate exactly the same job locally inside a test.
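Replaying a failing job can be as simple as pasting its JSON into a test fixture. A sketch using unittest (run it with python -m unittest), where FAILING_JOB is made-up data and failed_tasks is a hypothetical helper standing in for whatever logic is under investigation:

```python
import json
import unittest

# Hypothetical fixture: a failing job's JSON as it would be downloaded from S3.
FAILING_JOB = '{"id": "job-42", "tasks": {"task-id-1": "ok", "task-id-2": "ko", "task-id-3": "ok"}}'


def failed_tasks(job: dict) -> list:
    """Return the sorted ids of tasks that ended as "ko"."""
    return sorted(task_id for task_id, result in job["tasks"].items() if result == "ko")


class ReplayFailingJobTest(unittest.TestCase):
    def test_reproduces_the_failure(self):
        job = json.loads(FAILING_JOB)
        self.assertEqual(failed_tasks(job), ["task-id-2"])
```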

[1]: In the end we failed to achieve that goal, even though the platform itself was working really well... but cool technology without money usually doesn't last.

UPDATE: A colleague from that company pointed out that my memory had failed me and I had mixed up two services. I've corrected the second example to reflect which service we actually removed the DB from (the demo website); I only proposed doing the same later for the template service, when we built it.

Tags: Development