The first step of generating text is, of course, pure randomness. A slightly more elaborate version would be randomly picking words from a dictionary, but of course that would still mostly generate nonsense.

A next step after pure random selection can be Markov models, which I recently covered. But in the end, is still quite blunt and memory heavy, as it will store potentially millions of repeated words.

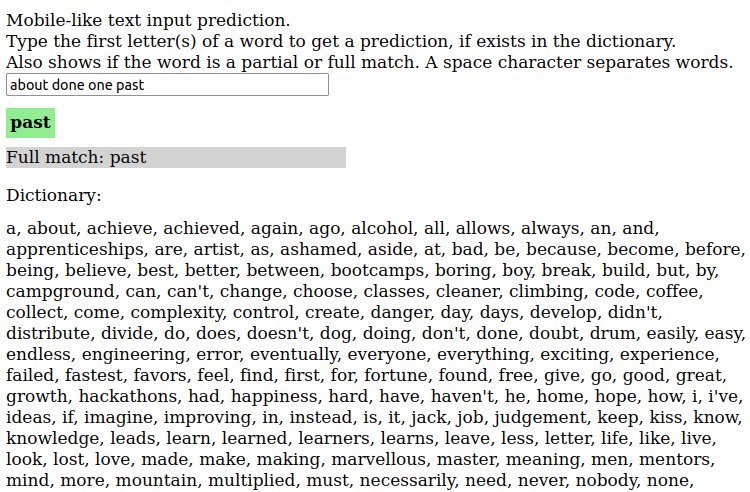

In any case, I wanted to play with another piece of the puzzle: word prediction, also known as autocomplete or word completion. In essence, given the first letters, predict the remaining ones to form a valid word. Like what mobile phones do [1].

But which word to predict when there are multiple possibilities? One most basic solution, and the one that I've implemented, is to choose by word frequency; given an input in which words are repeated, we build a dictionary where we store how many times each word appears; then, when making a prediction, we choose the word with highest frequency/probability.

To store the words dictionary and frequencies and perform queries, I've used a minimalistic trie data structure implementation. It provides just the needed functionality to allow insertion and search (partial, full and prediction-mode). It uses a simple storage mechanism: a map, where keys are the potential descendant letters, and values are the children trie nodes.

With a graph of trie nodes we can store the dictionary letter by letter, which also helps when predicting to avoid string manipulation operations.

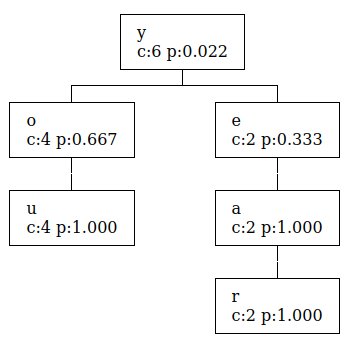

To better visualize it, check the following example, in which you and year are stored in a trie (c is count of occurrences, p is probability [0,1]):

A few notes of the simplified implementation:

- The implemented map storage structure would be both very wasteful if we were storing more complex data and require changes (key should be a hash and each node should store the data as another property), but for this example it makes writing Javascript logic quick and straightforward

- Letter lookups are fast, but the predict algorithm is not optimized; it checks all children to see which one is the most probable next letter (but not recursively, just direct descendants). We could cache/store the highest one and make it

O(1)too.

You can try it from kartones.net/demos/027/, and the source code is not minimized so it is perfectly readable and includes some comments:

- trie.mjs: the one to look at, the trie implementation

- debounce.mjs: a simple function debounce implementation

- dictionary.mjs: boring, a list of quotes and splitting them into words to feed the trie

- main.mjs: boring, just wiring everything

And this is how it looks:

[1]: And, on a much more complex scale, also similar to what Large Language Models do: predict next tokens. GitHub blog's nice explanation.